Biography

Jian Wu received his B.S. in Electronic and Information Engineering and M.S. in Communication and Information System from Huazhong University of Science and Technology, Wuhan, China. His Ph.D. research interests include robust signal processing for wearable IMU sensors, activity and gesture recognition, sensor fusion, and sensor system design and development. He has authored or co-authored 19 papers and 2 patents, and actively serves as a reviewer for multiple journals and conferences. His research on American Sign Language recognition won second place (out of 276 teams) in the Texas Instruments Innovation Challenge North America in 2015. He completed an internship at Facebook and designed the hardware of a body composition measurement unit for TI, which was showcased at CES and is used by TI as a reference design.

Google Scholar Link: https://scholar.google.com/citations?user=YZtL62gAAAAJ&hl=en

Education

- Ph.D. Computer Engineering, Texas A&M University, College Station, TX

- M.S. Electrical Engineering, Huazhong University of Science and Technology, Wuhan, China

- B.S. Electrical Engineering, Huazhong University of Science and Technology, Wuhan, China

Research Abstract

Activity and gesture recognition using wearable inertial measurement units (IMUs) provides important context for many ubiquitous sensing applications. Such systems are gaining popularity due to their minimal cost and ability to provide sensing functionality at any time and place. However, several factors can affect system performance, such as sensor location and orientation displacement, activity and gesture inconsistency, movement speed variation, and a lack of information about tiny motions. In my research, different signal processing solutions were developed to address these challenges. First, zero-effort opportunistic calibration algorithms for sensor orientation and location displacement were proposed, leveraging environmental camera information. Second, a robust orientation-independent, speed-independent recognition algorithm was developed to work with arbitrary sensor orientation. Third, a sensor fusion approach was introduced for American Sign Language recognition.

Projects

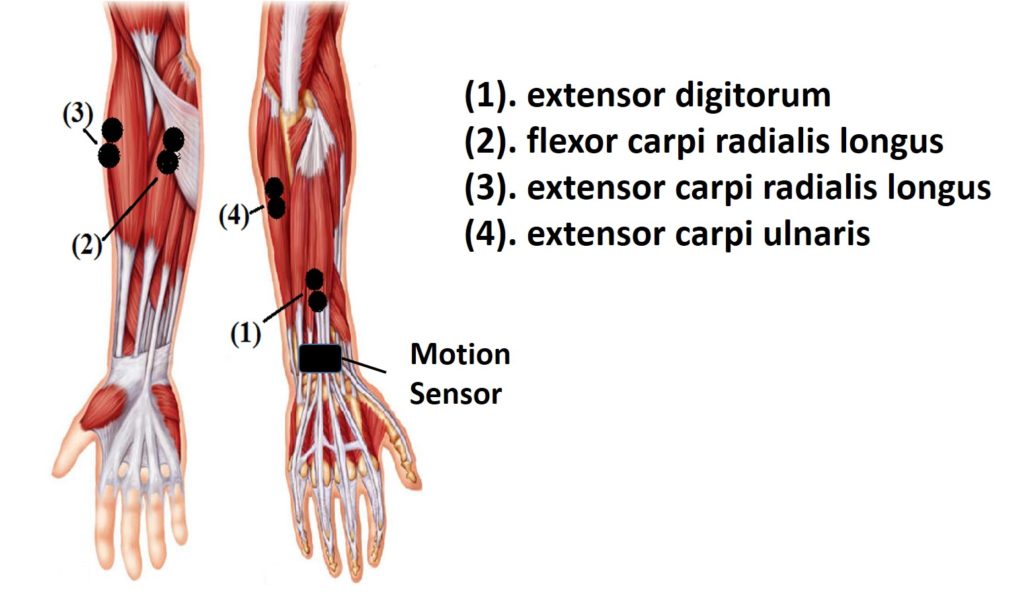

American Sign Language Recognition using Wearable IMU and sEMG

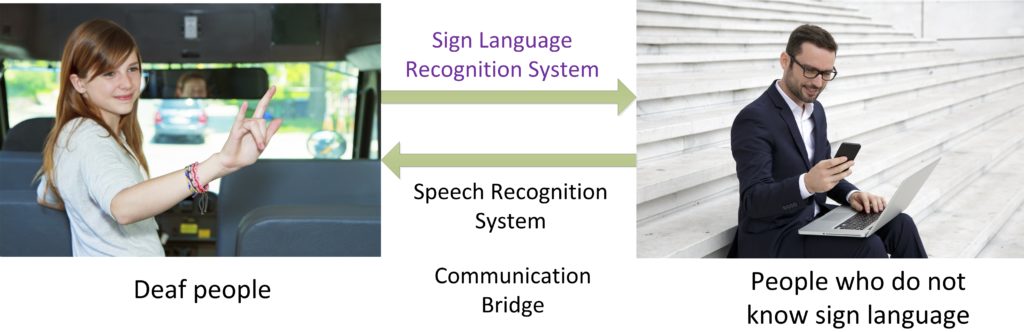

A sign language is a language that uses manual communication to convey meaning, as opposed to acoustically conveyed sound patterns. It is a natural language widely used by deaf people to communicate with each other. However, there are communication barriers between hearing people and deaf individuals, either because signers may not be able to speak and hear or because hearing individuals may not be able to sign. This communication gap can have a negative impact on the lives and relationships of deaf people. A sign language recognition (SLR) system is a useful tool that enables communication between deaf people and hearing people who do not know sign language by translating sign language into speech or text. In this project, I designed an American Sign Language recognition system using wearable IMU and sEMG sensors. This is the first time such a system has been proposed and designed for American Sign Language recognition. Our system achieves an average accuracy of 96.16% in intra-subject testing on 80 signs.

This project won second place in the Texas Instruments Innovation Challenge North America (out of 276 teams). It was widely reported by mainstream media outlets, including Reuters and the NY Times. Here is the original report video from Reuters: https://www.reuters.com/video/2015/11/24/wearable-tech-to-decode-sign-language?videoId=366438327

A demonstration video of our system is shown below.

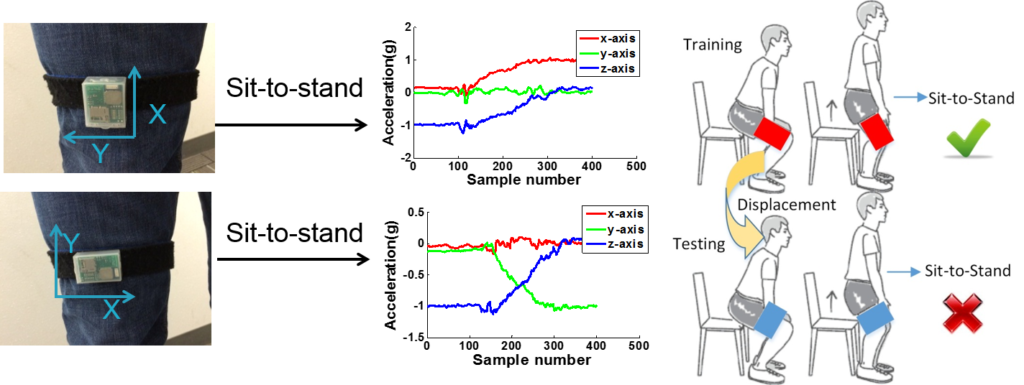

Robust Orientation-independent Activity/Gesture Recognition Using Wearable IMU Sensors

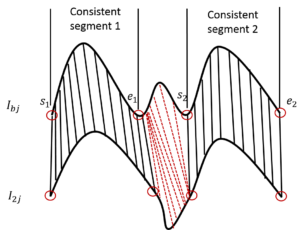

Activity/gesture recognition using wearable motion sensors, also known as inertial measurement units (IMUs), provides important context for many ubiquitous sensing applications. The design of activity/gesture recognition algorithms typically requires information about the placement and orientation of the IMUs on the body, and the signal processing is often designed to work with a known orientation and placement of the sensors. However, sensors may be worn or held differently, in which case the signal processing algorithms may not perform as well as expected. In this work, we present an orientation-independent activity/gesture recognition approach by exploring a novel feature set that functions irrespective of how the sensors are oriented. A template refinement technique is proposed to determine the best representative segment of each gesture, thus improving the recognition accuracy. Our approach is evaluated in the context of two applications: recognition of activities of daily living and hand gesture recognition. Our results show that our approach achieves average accuracies of 98.2% and 95.6% for subject-dependent testing of activities of daily living and gestures, respectively. Here is a video that shows our system detecting several activities of daily living with arbitrary sensor orientation: https://www.youtube.com/watch?v=1tpCQhpwXCc&t=30s

In this work, the direction of the gesture is also detected with a magnetometer, independent of the sensor orientation. This information is useful when wearable-based gesture recognition is used as a gesture interface for smart devices: the system knows which device the user is trying to interact with and responds accordingly. Here is a video demonstration that shows our algorithm can be used to interact with smart devices placed at different locations in a room.
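As a rough illustration of what "orientation-independent" can mean for a feature, the sketch below uses the magnitude of a 3-axis gyroscope sample, which does not change when the sensor frame is rotated. This is only a textbook example of a rotation-invariant quantity and is not claimed to be the feature set used in this project; the function names and sample values are hypothetical.

```python
import math

def rotate(sample, yaw, pitch):
    """Rotate a 3-axis sample by yaw about z, then pitch about y (radians).

    Stands in for wearing the same sensor in a different orientation.
    """
    x, y, z = sample
    # Rotation about the z axis.
    x, y = (x * math.cos(yaw) - y * math.sin(yaw),
            x * math.sin(yaw) + y * math.cos(yaw))
    # Rotation about the y axis.
    x, z = (x * math.cos(pitch) + z * math.sin(pitch),
            -x * math.sin(pitch) + z * math.cos(pitch))
    return (x, y, z)

def magnitude_series(samples):
    """Per-sample Euclidean norm; unchanged by any rotation of the sensor frame."""
    return [math.sqrt(x * x + y * y + z * z) for x, y, z in samples]

# Hypothetical gyroscope readings (rad/s) for a short movement.
gyro = [(0.1, -0.4, 0.2), (0.5, 0.0, -0.3), (1.2, 0.7, 0.1)]

# The same movement as seen by a sensor mounted with a different orientation.
gyro_rotated = [rotate(s, yaw=0.9, pitch=-0.4) for s in gyro]

original_feature = magnitude_series(gyro)
rotated_feature = magnitude_series(gyro_rotated)

# The per-axis values differ, but the magnitude feature matches.
max_difference = max(abs(a - b) for a, b in zip(original_feature, rotated_feature))
print(max_difference < 1e-9)
```

The per-axis readings differ between the two mountings, while the magnitude series agrees up to floating-point error, which is the property a recognizer needs in order to ignore how the sensor is worn.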

Opportunistic Sensor Orientation and Location Displacement Calibration

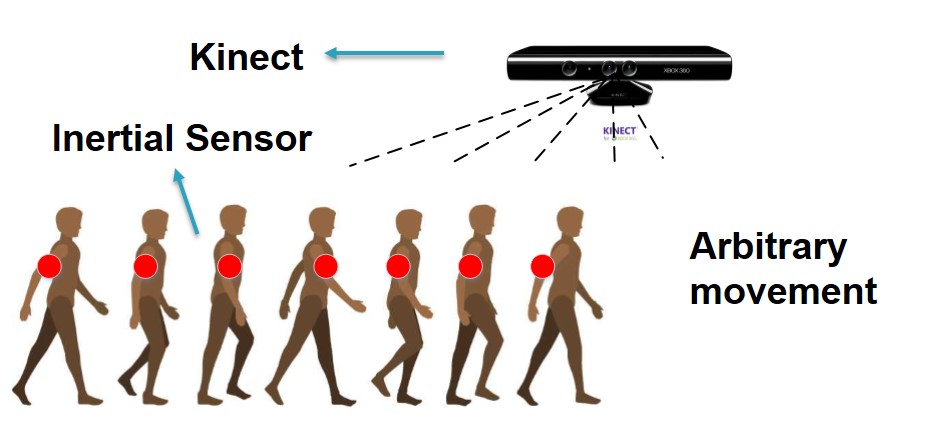

To address the challenges of sensor orientation and location displacement in activity/gesture recognition, I also proposed techniques that calibrate the sensor orientation and location displacement by leveraging environmental camera information. The calibration is done seamlessly whenever the user is in front of the camera and performs arbitrary movements. For sensor orientation displacement calibration, I proposed a two-step search algorithm. For location calibration, I proposed a cascade classifier to identify the location of the wearable sensors on the body.

Upper Body Motion Capture System Using IMU Sensors

Motion capture plays an important role in the fields of gaming, film-making, animation and human-computer interaction. Existing commercial motion capture systems are mainly based on multiple high-resolution cameras, e.g. Vicon and OptiTrack. These systems require cameras to be mounted in a controlled environment and can only capture markers in clear view, resulting in poor rendition of many activities. Low-cost vision-based systems (e.g. Kinect) suffer from a similarly limited sensing range. In contrast, wearable sensors capable of capturing movements are gaining popularity due to their minimal cost and their ability to provide sensing opportunities at any time and place. Here is the demonstration video.

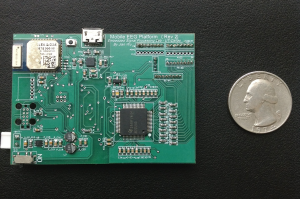

ESP Wearable Sensor Platform Design and Development

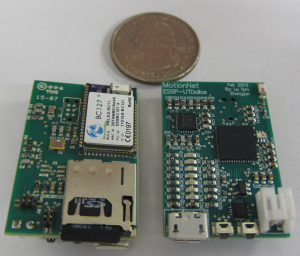

MotionNet

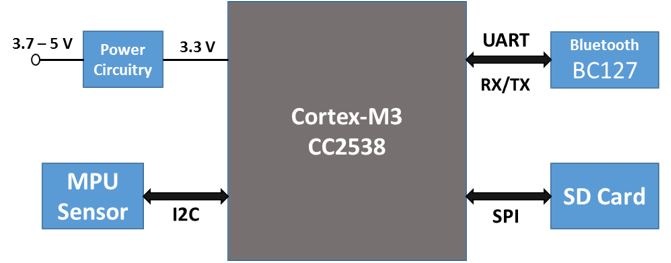

I was in charge of the system design and development of ESP MotionNet. MotionNet is a sensor platform that consists of a motion sensing module, an IEEE 802.15.4-based 6LoWPAN module (a popular component of IoT), dual-mode Bluetooth, an SD card storage module and an analog input interface. The Cortex-M3-based CC2538 (TI) is the low-power processor in the platform. A power management unit and a charging circuit are also included. The platform can be used for different applications; motion tracking, long-term movement monitoring and beacon proximity are the most popular.

Physiological Signal Acquisition Platform

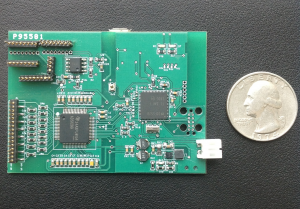

I was involved in the design and development of the physiological signal acquisition system, and was responsible for hardware design and testing. This system incorporates two daisy-chained TI ADS1299 analog front ends for 16-channel EEG, a TI MSP430 microcontroller and a BlueRadios dual-mode Bluetooth radio for wireless communication of the data to a PC. A gain of 1 is used on the ADS1299 differential amplifiers so as not to saturate them. The board is battery powered and can recharge the battery through a micro-USB interface. This system is used extensively for EEG, ECG and sEMG related research in our lab.
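To make the gain setting concrete, here is a minimal sketch of how a raw 24-bit ADS1299 sample could be converted to volts on the PC side. It assumes the device's internal 4.5 V reference and the two's-complement, MSB-first output format; the function names are hypothetical and this is not the lab's actual firmware or host software.

```python
# Assumed ADS1299 settings (not stated in the text above except for the gain).
VREF_VOLTS = 4.5   # assumption: internal reference voltage
PGA_GAIN = 1       # gain of 1, as used on this board

def sample_from_bytes(three_bytes):
    """Assemble one 24-bit channel word from 3 bytes, most significant byte first."""
    if len(three_bytes) != 3:
        raise ValueError("expected exactly 3 bytes per channel sample")
    return int.from_bytes(three_bytes, "big")

def counts_to_volts(raw24, gain=PGA_GAIN, vref=VREF_VOLTS):
    """Convert a 24-bit two's-complement code to the differential input voltage."""
    if not 0 <= raw24 <= 0xFFFFFF:
        raise ValueError("raw sample must fit in 24 bits")
    # Sign-extend: codes with the top bit set are negative.
    signed = raw24 - (1 << 24) if raw24 & 0x800000 else raw24
    # One LSB spans the full range 2 * VREF / gain divided into 2^24 steps.
    lsb_volts = (2 * vref / gain) / (1 << 24)
    return signed * lsb_volts

# Most negative code maps to -VREF / gain; zero code maps to 0 V.
negative_full_scale = counts_to_volts(sample_from_bytes(b"\x80\x00\x00"))
zero_volts = counts_to_volts(sample_from_bytes(b"\x00\x00\x00"))
print(negative_full_scale, zero_volts)
```

Under these assumptions the input range scales as ±VREF / gain, so a gain of 1 gives the widest range and a higher gain would shrink it proportionally, which is consistent with choosing a gain of 1 to avoid saturating the amplifiers.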

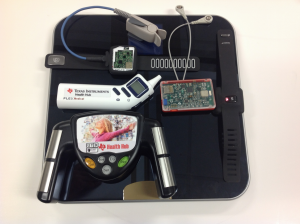

Texas Instruments Reference Design

I was involved in a reference design for cutting-edge analog front ends by Texas Instruments. It includes several devices that measure a set of physiological parameters, including body fat, body weight, blood oxygenation, step count and ECG. I was responsible for the hardware design and testing of the body composition measurement unit, and was also involved in the system design and testing. I demonstrated this reference design at CES with TI collaborators.

Industry Internship

I did an internship at Facebook, Menlo Park, in Summer 2016. My project covered corpus subtopic discovery and the evaluation of two word embedding models. Corpus subtopic discovery aims to automatically discover interesting subtopics for a given corpus, which includes many paragraphs or sentences of text about a certain topic. Before I joined this project, our group already had a framework for corpus subtopic discovery. However, it did not perform well in practice, and there was no evaluation method to quantify how well the system performed when different algorithms were applied. I developed two keyword-guided evaluation datasets, improved the system accuracy by 12% and reduced the computation time for each topic by 30 minutes (from one hour).

Publications

Journal Papers

J1: Terrell R. Bennett, Jian Wu, Nasser Kehtarnavaz, Roozbeh Jafari, Inertial Measurement Unit-Based Wearable Computers for Assisted Living Applications: A signal processing perspective, IEEE Signal Processing Magazine, vol. 33, issue 2, March 2016.

J2: Terrell Bennett, Hunter Massey, Jian Wu, Syed Ali Hasnain, Roozbeh Jafari, MotionSynthesis Toolset (MoST): An Open Source Tool and Dataset for Human Motion Data Synthesis and Validation, IEEE Sensors Journal (SENSORS), vol. 16, no. 13, pp. 5365-5375, July 2016.

J3: Jian Wu, Lu Sun, Roozbeh Jafari, A Wearable System for Recognizing American Sign Language in Real-time Using IMU and Surface EMG Sensors, IEEE Journal of Biomedical and Health Informatics (J-BHI), vol. 20, no. 5, pp. 1281-1290, September 2016.

J4: Jian Wu, Roozbeh Jafari, Seamless Vision-assisted Placement Calibration for Wearable Inertial sensors, ACM Transactions on Embedded Computing Systems (TECS), vol. 16, issue 3, pp. 71:1-71:22, July 2017.

J5: Jian Wu, Roozbeh Jafari, Orientation Independent Activity/Gesture Recognition Using Wearable Motion Sensors, IEEE Internet of Things Journal, 2018.

Conference and Workshop Papers

C1: Terrell R. Bennett, Claudio Savaglio, David Lu, Hunter Massey, Xianan Wang, Jian Wu, Roozbeh Jafari, MotionSynthesis Toolset (MoST): A Toolset for Human Motion Data Synthesis and Validation, MobileHealth, August 11-14, 2014, Philadelphia, PA, USA.

C2: Jian Wu, Roozbeh Jafari, Zero-Effort Camera-Assisted Calibration Techniques for Wearable Motion Sensors, ACM International Conference on Wireless Health, October 29-31, 2014, Bethesda, MD, USA.

C3: Jian Wu, Zhongjun Tian, Lu Sun, Leonardo Estevez, Roozbeh Jafari, Real-time American Sign Language Recognition Using Wrist-worn Motion and Surface EMG Sensors, IEEE Body Sensor Networks Conference (BSN), June 9-12, 2015, Cambridge, MA, USA.

C4: Yashaswini Prathivadi, Jian Wu, Terrell R. Bennett, Roozbeh Jafari, Robust Activity Recognition using Wearable IMU Sensors, IEEE Sensors, November 3-5, 2014, Valencia, Spain.

C5: Rajesh Kuni, Yashaswini Prathivadi, Jian Wu, Terrell R. Bennett, Roozbeh Jafari, Exploration of Interactions Detectable by Wearable IMU Sensors, IEEE Body Sensor Networks Conference (BSN), June 9-12, 2015, MIT, Cambridge, USA.

C6: Jian Wu, Reese Grimsley, Roozbeh Jafari, A Robust User Interface for IoT using Context-aware Bayesian Fusion, IEEE International Conference on Wearable and Implantable Body Sensor Networks (BSN), March 4-7, 2018, Las Vegas, NV, USA.

C7: Jian Wu, Soumya Jyoti Behera, Radu Stoleru, Meeting Room State Detection using Environmental Wi-Fi Signature, Proceedings of the 15th ACM International Symposium on Mobility Management and Wireless Access, Pages 9-16, November 21-25, Miami, FL, USA.

C8: Jian Wu, Ali Akbari, Reese Grimsley, Roozbeh Jafari, A Decision Level Fusion and Signal Analysis Technique for Activity Segmentation and Recognition on Smart Phones, ACM SHL Recognition Challenge in sixth International Workshop on Human Activity Sensing Corpus and Applications, in conjunction with UbiComp, October 12, 2018, Suntec City, Singapore.

C9: Ali Akbari, Jian Wu, Reese Grimsley, Roozbeh Jafari, Hierarchical Signal Segmentation and Classification for Accurate Activity Recognition, ACM SHL Recognition Challenge in sixth International Workshop on Human Activity Sensing Corpus and Applications, in conjunction with UbiComp, October 12, 2018, Suntec City, Singapore.

Book Chapters

BC1: Vitali Loseu, Jian Wu, Roozbeh Jafari, Mining Techniques for Body Sensor Network Data Repository, Edited by Edward Sazonov and Michael Neuman, Wearable Sensors: Fundamentals, Implementation and Applications, Elsevier, 2014, ISBN 9780124186620.

BC2: Jian Wu, Roozbeh Jafari, Wearable Computers for Sign Language Recognition, Handbook of Large-Scale Distributed Computing in Smart Healthcare, Springer, 2017.

Short Papers

SP1: Viswam Nathan, Jian Wu, Chengzhi Zong, Yuan Zou, Omid Dehzangi, Mary Reagor, Roozbeh Jafari, Demonstration Paper: A 16-channel Bluetooth Enabled Wearable EEG Platform with Dry-contact Electrodes for Brain Computer Interface, ACM International Conference on Wireless Health, November 1-3, 2013, Baltimore, MD, USA.

SP2: Jian Wu, Zhanyu Wang, Suraj Raghuraman, Balakrishnan Prabhakaran, Roozbeh Jafari, Demonstration abstract: Upper Body Motion Capture System Using Inertial Sensors, ACM/IEEE International Conference on Information Processing in Sensor Networks (IPSN), April 15-17, 2014, Berlin, Germany.

Patents

P1: Roozbeh Jafari, Jian Wu, Context aware movement recognition system, U.S. Patent Application 15/398,392, filed July 6, 2017.

P2: Nasser Kehtarnavaz, Roozbeh Jafari, Kui Liu, Chen Chen, Jian Wu, Fusion of inertial and depth sensors for movement measurements and recognition, U.S. Patent Application 15/092,347, filed October 6, 2016.

Contact

Email: jian.wu (at) tamu (dot) edu